How to draft contracts responsibly with AI assistance

Legal teams across the country are discovering a hard truth: AI-assisted contract drafting can cut hours of work down to minutes, but a single unverified output can introduce errors that survive all the way to execution. One in-house team recently relied on an AI tool to generate a vendor agreement, only to find that the indemnification clause cited a statute that did not exist. The clause passed two internal reviews before a partner caught it. That scenario is not an outlier. It is a preview of what happens when speed outpaces process.

Table of Contents

- Understanding your duties: competence, confidentiality, and supervision

- Preparation: building a workflow for responsible AI contract drafting

- The contract drafting process: responsible AI integration step by step

- Troubleshooting and mitigating common risks: hallucinations, edge cases, and compliance failures

- Why treating AI as an assistant, not an oracle, changes outcomes

- Explore responsible, source-linked AI contract workflows

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Human verification is essential | Always treat AI-generated drafts as unverified until thoroughly reviewed by a legal expert. |
| Understand legal duties | Lawyers must apply competence, confidentiality, and supervision when integrating AI tools. |
| Audit and document workflows | Maintain traceable records of AI prompts, reviewers, and contract changes for compliance and defensibility. |
| Target common failure points | Focus review on citations, statutes, jurisdictional clauses, and possible hallucinations. |
| Structured contracts with AI vendors | Govern data use, risk, and audit rights contractually when using third-party AI tools. |

Understanding your duties: competence, confidentiality, and supervision

Before any AI tool touches a contract, legal professionals need to be clear on what professional responsibility actually requires. The rules have not been rewritten for AI. They have been applied to it.

ABA Formal Opinion 512 frames generative AI as a technology lawyers must use competently under existing duties, including confidentiality, supervision, and verification of work product. That framing matters. It means the lawyer who runs a prompt and pastes the output into a contract draft is still the professional responsible for every word in that document.

The core duties that apply when using AI in contract drafting include:

- Competence: You must understand enough about how the AI tool works to identify when it is likely to fail, not just when it succeeds.

- Confidentiality: Client data uploaded to a third-party AI platform may be retained, used for model training, or exposed in a breach. The duty of confidentiality requires you to understand those risks before you input anything.

- Supervision: If a junior associate or paralegal is running AI-assisted drafts, the supervising attorney is responsible for the output as if they had written it personally.

- Verification: AI-generated text is an unverified draft. Period. It must be treated that way at every stage.

“Lawyers remain responsible for all work product, regardless of the tool used to generate it. Generative AI does not transfer or dilute professional responsibility.”

Starting with a firm internal policy that encodes these duties into your workflow is not optional. It is the foundation. Platforms built around AI drafting principles can help teams operationalize these obligations rather than leaving them as abstract reminders on a compliance checklist.

Preparation: building a workflow for responsible AI contract drafting

Knowing your duties is the starting point. Building a workflow that actually enforces them is the harder work. Most teams that run into trouble with AI-assisted drafting do not fail because they ignored ethics rules. They fail because they never translated those rules into concrete process steps.

A responsible contract-drafting workflow should operationalize review gates: treat AI text as unverified drafts and require human verification before release or reliance. That is not a suggestion. It is the minimum standard for defensible practice.

Before your team drafts a single AI-assisted contract, work through this preparation checklist:

- Policy layer: Draft and adopt an internal AI use policy that specifies which tools are approved, what data can be shared, and who is authorized to use AI for which tasks.

- Tool selection: Choose platforms that offer source tracking, audit logs, access controls, and clear data retention policies. Avoid tools that cannot tell you where a clause came from.

- Vendor agreements: Responsible use can include structured vendor contracting and clause-level governance for model training, data rights, liability, and auditability. If your AI vendor agreement does not address these points, negotiate them before you go live.

- Role assignments: Designate who generates AI drafts, who reviews them, and who has final sign-off authority. These should be different people.

- Training: Every team member using AI tools should understand common failure modes, especially hallucinations, before they use the platform on live matters.
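The role-assignment item in the checklist above (different people for drafting, review, and sign-off) is easy to state and easy to violate on a busy matter. A minimal sketch of how a team might check it automatically follows. The `RoleAssignment` structure and `check_separation` function are hypothetical names invented for this illustration, not part of any particular platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleAssignment:
    """Who does what on one AI-assisted drafting task (hypothetical structure)."""
    drafter: str    # runs the AI tool and produces the draft
    reviewer: str   # performs the human review and verification
    approver: str   # holds final sign-off authority


def check_separation(roles: RoleAssignment) -> list[str]:
    """Return a list of problems; an empty list means the roles are separated."""
    # Normalize names so "Ana" and "ana " count as the same person.
    names = {
        "drafter": roles.drafter.strip().lower(),
        "reviewer": roles.reviewer.strip().lower(),
        "approver": roles.approver.strip().lower(),
    }
    problems = []
    for role, name in names.items():
        if not name:
            problems.append(f"{role} is not assigned")
    pairs = [("drafter", "reviewer"), ("drafter", "approver"), ("reviewer", "approver")]
    for first, second in pairs:
        if names[first] and names[first] == names[second]:
            problems.append(f"{first} and {second} are the same person")
    return problems


if __name__ == "__main__":
    print(check_separation(RoleAssignment("Ana", "Ben", "Cleo")))
    print(check_separation(RoleAssignment("Ana", "Ana", "Cleo")))
```

Matching on names is only a stand-in. A real system would more likely compare user IDs from the firm's directory.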

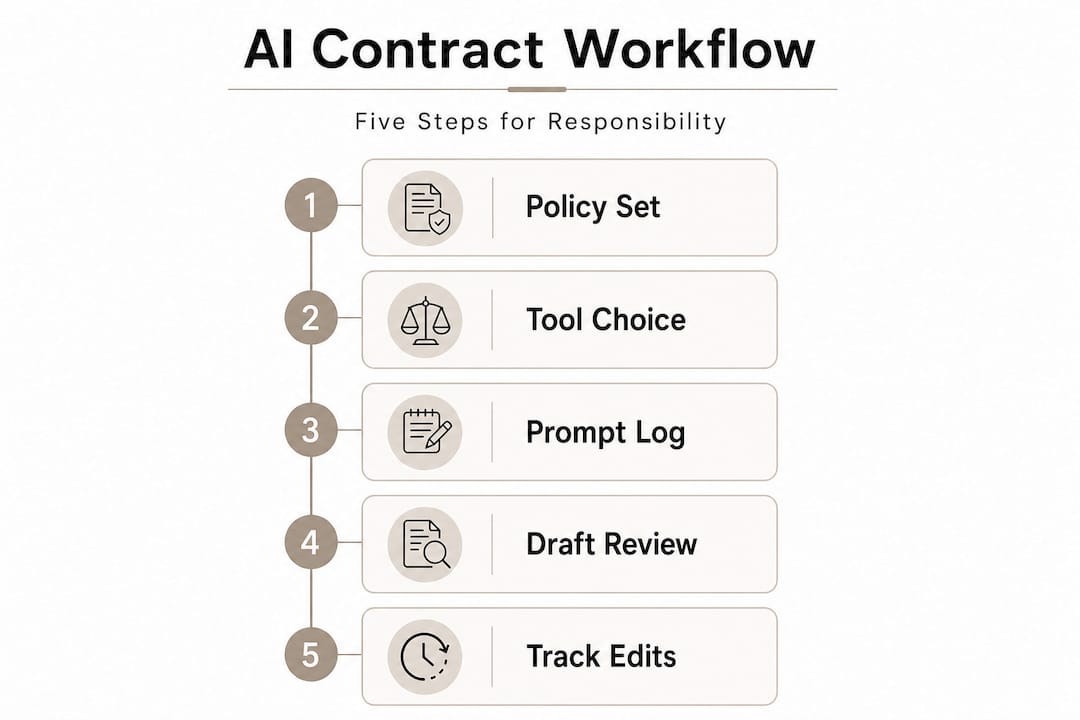

Here is a practical overview of what a governed AI drafting workflow looks like across key dimensions:

| Dimension | What to address | Review checkpoint |
|---|---|---|
| Tool selection | Source tracking, privacy, audit logs | Before onboarding |
| Prompt design | Specificity, jurisdiction, clause type | Before generation |
| Output review | Accuracy, citations, legal fit | After generation |
| Vendor contract | Data rights, liability, model training | Before contract signing |
| Audit records | Prompts, reviewers, outcomes | Ongoing |

Pro Tip: Maintain a running log of every AI-assisted contract task, including the prompt used, the reviewer assigned, and the outcome of the verification check. This log is your best defense if a client or regulator ever questions your process. It also builds institutional knowledge about which prompt patterns produce reliable results and which ones need refinement.
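One possible shape for that running log is an append-only file with one JSON record per task. The sketch below assumes a local JSON Lines file. The field names (`matter_id`, `tool`, `outcome`, and so on) and the helper functions are illustrative assumptions, not a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from pathlib import Path


@dataclass
class AuditEntry:
    """One AI-assisted drafting task (hypothetical field names)."""
    matter_id: str
    prompt: str          # the exact prompt that was run
    tool: str            # which approved AI tool produced the draft
    reviewer: str        # who was assigned to verify the output
    outcome: str         # e.g. "passed", "failed", "pending"
    notes: str = ""
    timestamp: str = ""  # filled in automatically when left empty


def append_entry(log_path: Path, entry: AuditEntry) -> None:
    """Append one record as a single JSON line; existing lines are never rewritten."""
    if not entry.timestamp:
        entry.timestamp = datetime.now(timezone.utc).isoformat()
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(asdict(entry)) + "\n")


def read_entries(log_path: Path) -> list[dict]:
    """Load every record from the log, skipping blank lines."""
    with log_path.open(encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]
```

An append-only format keeps earlier records untouched, which suits a log meant to show what was checked and when. Prompts can contain client information, so a log like this needs the same access controls and retention rules as the rest of the matter file.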

Building AI governance in legal workflows from the ground up takes effort, but it pays off the first time a contract dispute arises and you can show a clear, documented chain of human oversight.

The contract drafting process: responsible AI integration step by step

Preparation is complete. Now the actual drafting work begins. The steps below reflect a process that is both efficient and defensible. Skipping steps, especially the verification gates, is where teams get into trouble.

- Define the scope. Before opening any AI tool, document the contract type, governing law, jurisdiction, key parties, and any non-standard terms. This scope document becomes the basis for your prompt and your review checklist.

- Select a template or precedent. AI performs better when it is working from a known structure. Start with a firm-approved template or a jurisdiction-specific precedent. Feed that context into the AI rather than asking it to generate from scratch.

- Craft a structured prompt. Specify the governing law, jurisdiction, clause type, and any specific obligations or carve-outs. Vague prompts produce vague contracts.

- Generate the draft. Run the prompt and capture the full output, including any source citations the tool provides. Do not edit yet.

- Human review gate. A qualified reviewer reads the entire draft against the scope document. This is not a skim. It is a line-by-line review with the original template open for comparison.

- Verification pass. Every cited statute, case, or regulatory reference is independently verified against primary sources. Every jurisdictional claim is checked. Every defined term is confirmed for consistency.

- Release or return for revision. If the draft passes verification, it moves forward. If it fails, it goes back for targeted revision. Do not simply regenerate a fresh AI draft without first understanding what went wrong.
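The gates in the steps above can be enforced mechanically, so that a draft cannot be marked released unless it has passed both human review and verification. The sketch below is one way to model that. The stage names and the `Draft` class are hypothetical and only illustrate the idea.

```python
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"                    # AI output captured, awaiting human review
    REVIEWED = "reviewed"              # line-by-line human review done
    VERIFIED = "verified"              # citations, jurisdiction, defined terms checked
    RELEASED = "released"
    RETURNED = "returned_for_revision"


# Allowed moves. RELEASED is reachable only from VERIFIED, and a returned
# draft re-enters the pipeline at DRAFT, so it is reviewed again.
ALLOWED = {
    Stage.DRAFT: {Stage.REVIEWED, Stage.RETURNED},
    Stage.REVIEWED: {Stage.VERIFIED, Stage.RETURNED},
    Stage.VERIFIED: {Stage.RELEASED, Stage.RETURNED},
    Stage.RETURNED: {Stage.DRAFT},
    Stage.RELEASED: set(),
}


class Draft:
    def __init__(self, title: str):
        self.title = title
        self.stage = Stage.DRAFT
        self.history = [Stage.DRAFT]

    def advance(self, target: Stage) -> None:
        """Move to the target stage, refusing any move that skips a gate."""
        if target not in ALLOWED[self.stage]:
            raise ValueError(f"cannot move from {self.stage.value} to {target.value}")
        self.stage = target
        self.history.append(target)


if __name__ == "__main__":
    draft = Draft("Vendor agreement")
    try:
        draft.advance(Stage.RELEASED)  # skipping the gates is rejected
    except ValueError as err:
        print(err)
```

The `history` list doubles as a simple record of which gates a draft actually passed.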

Empirical benchmarking suggests some AI tools can match or outperform humans on limited drafting tasks, but human verification remains necessary because accuracy is not perfect and risk varies significantly by use case. A high-performing AI on a standard NDA is not the same as a high-performing AI on a cross-border acquisition agreement with multi-jurisdictional regulatory hooks.

Here is how the three drafting approaches compare across key risk and efficiency dimensions:

| Factor | Manual drafting | AI-assisted, no verification | AI-assisted with verification |
|---|---|---|---|
| Speed | Slow | Fast | Moderate |
| Citation accuracy | High | Variable | High |
| Hallucination risk | None | High | Low |
| Defensibility | High | Low | High |
| Efficiency gain | Baseline | High but risky | Significant and safe |

The middle approach, AI-assisted drafting with no verification, is the trap. Teams that adopt AI for speed but skip verification get fast drafts with hidden errors and no audit trail to show they checked.

Pro Tip: Use structured prompts that specify the exact clause type, governing law, and any regulatory framework that applies. For example: “Draft a limitation of liability clause governed by New York law for a software-as-a-service agreement between two commercial entities, excluding consequential damages.” That level of specificity produces outputs that are far easier to verify against your scope document and review checklist.
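A structured prompt like the one in the tip can be assembled from the scope document's fields rather than typed freehand, which makes missing details obvious before anything is generated. The following is a minimal sketch. `ClauseRequest` and `build_prompt` are hypothetical names, and the template wording simply mirrors the example above.

```python
from dataclasses import dataclass, field


@dataclass
class ClauseRequest:
    """Scope details needed before prompting (hypothetical structure)."""
    clause_type: str
    governing_law: str
    agreement_type: str
    parties: str
    carve_outs: list[str] = field(default_factory=list)


def build_prompt(req: ClauseRequest) -> str:
    """Build a prompt string, refusing to proceed if a required field is blank."""
    required = {
        "clause_type": req.clause_type,
        "governing_law": req.governing_law,
        "agreement_type": req.agreement_type,
        "parties": req.parties,
    }
    missing = [name for name, value in required.items() if not value.strip()]
    if missing:
        raise ValueError("missing scope fields: " + ", ".join(missing))
    prompt = (
        f"Draft a {req.clause_type} clause governed by {req.governing_law} law "
        f"for a {req.agreement_type} between {req.parties}"
    )
    if req.carve_outs:
        prompt += ", " + ", ".join(req.carve_outs)
    return prompt + "."


if __name__ == "__main__":
    print(build_prompt(ClauseRequest(
        clause_type="limitation of liability",
        governing_law="New York",
        agreement_type="software-as-a-service agreement",
        parties="two commercial entities",
        carve_outs=["excluding consequential damages"],
    )))
```

Storing the `ClauseRequest` alongside the generated prompt also gives the reviewer a structured record of what was asked for.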

Troubleshooting and mitigating common risks: hallucinations, edge cases, and compliance failures

Even well-designed workflows encounter problems. The goal is not to eliminate all AI error, which is not currently possible. The goal is to catch errors before they matter.

The most common failure modes in AI-assisted contract drafting include:

- Hallucinated citations: The AI invents a statute, case, or regulatory reference that does not exist or cites a real source for a proposition it does not actually support.

- Blended legal concepts: The AI merges standards from different jurisdictions or legal frameworks, producing a clause that looks coherent but applies the wrong legal test.

- Jurisdiction mismatches: The AI applies default assumptions from one legal system (often federal or common law) to a contract that requires state-specific or civil law treatment.

- Outdated authority: The AI cites a statute that has been amended or a case that has been overruled, because its training data has a cutoff date.

- Inconsistent defined terms: The AI uses the same term with slightly different meanings across different sections of the same contract.

Edge cases that commonly break AI-assisted drafting include jurisdiction-specific clauses, citation accuracy, and hallucinated authority. Responsible workflows explicitly target these failure modes rather than hoping they do not appear.

“Never trust, always verify.” The NCSC AI Hallucination Guide identifies hallucinations such as fabricated citations, distorted holdings, false procedural information, and blended legal concepts as categories that require systematic human verification, not spot-checking.

To audit AI-generated contract outputs effectively, follow this sequence:

- First, run every statutory citation against the current version of the relevant code. Do not assume the AI has the right section number.

- Second, verify every case citation by pulling the actual decision and confirming the proposition the AI attributed to it.

- Third, compare jurisdictional assumptions in the draft against the governing law clause.

- Fourth, check defined terms for internal consistency across the entire document.

- Fifth, flag any clause that the reviewer cannot independently verify against a primary source.
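The five-step audit sequence can be tracked per draft so that nothing is silently skipped. The sketch below is illustrative only. The check labels paraphrase the steps above, and the rule that any flagged clause blocks a pass is an assumption of this example, not a requirement stated in the article.

```python
from dataclasses import dataclass, field

# Labels paraphrasing the five audit steps (wording is this sketch's own).
CHECKS = (
    "statutory citations checked against the current code",
    "case citations confirmed against the actual decisions",
    "jurisdictional assumptions match the governing law clause",
    "defined terms consistent across the document",
    "unverifiable clauses flagged",
)


@dataclass
class VerificationRecord:
    """Tracks which audit steps are done for one draft (hypothetical structure)."""
    completed: set[str] = field(default_factory=set)
    flagged_clauses: list[str] = field(default_factory=list)

    def complete(self, check: str) -> None:
        if check not in CHECKS:
            raise ValueError(f"unknown check: {check}")
        self.completed.add(check)

    def flag(self, clause_ref: str) -> None:
        """Record a clause the reviewer could not verify against a primary source."""
        self.flagged_clauses.append(clause_ref)

    def outstanding(self) -> list[str]:
        """Checks not yet completed, in the order of the audit sequence."""
        return [check for check in CHECKS if check not in self.completed]

    def passes(self) -> bool:
        # Assumption: a draft passes only when every check is done
        # and no clause remains flagged as unverifiable.
        return not self.outstanding() and not self.flagged_clauses
```

A reviewer would call `complete` as each step finishes and `flag` for any clause that cannot be verified. `outstanding()` then shows what is left before the draft can move on.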

Pro Tip: For sensitive matters, preserve your prompt logs and review records as part of the matter file. If a contract is later disputed and opposing counsel questions whether AI was used responsibly, your audit trail is the evidence that professional standards were met. The same records help your verification process hold up under regulatory scrutiny.

The legal and reputational risks of missed errors are not hypothetical. A fabricated statute cited in a clause can undermine the enforceability of that provision. A jurisdiction mismatch in a choice of law clause can subject a client to a body of law it never intended to accept, and the same mistake in a forum selection clause can expose the client to litigation in an unexpected forum. These are not rare events. They are the predictable results of treating AI output as finished work.

Why treating AI as an assistant, not an oracle, changes outcomes

Here is the uncomfortable reality that most AI adoption conversations in legal practice avoid: the confidence of AI output is not a reliable indicator of its accuracy. An AI tool will state a fabricated statute in exactly the same tone and format as a real one. It will blend two incompatible legal standards without any indication that something is wrong. That surface confidence is the actual danger, not the tool itself.

A defensible approach is treating AI as a drafting assistant, not a legal oracle. That framing requires competence, confidentiality, supervision, and verification gates at every stage of the process.

The conventional wisdom in legal tech circles is that AI adoption is primarily a change management problem. Get lawyers comfortable with the tools, and the rest follows. That framing is wrong in a specific and important way. Comfort with AI tools without structured verification is not progress. It is risk accumulation at scale.

The teams that get the most sustainable value from AI-assisted drafting are not the ones who use AI the most. They are the ones who have built the clearest processes for when to trust AI output and when to override it. They treat every AI-generated draft as a starting point written by a very fast, very confident, and occasionally wrong junior associate. That mental model keeps the lawyer in the verification role rather than the passive approval role.

A practical discipline worth building into your team’s culture is the contract postmortem. When an AI-assisted contract reveals an error during review, or worse, after execution, document what happened. What prompt was used? What review step missed the error? What would have caught it earlier? Those lessons, accumulated over time, are what turn a generic AI workflow into a firm-specific, continuously improving drafting practice.

The teams that skip postmortems are the ones who repeat the same errors. The teams that institutionalize them build a genuine competitive advantage in quality and defensibility.

Explore responsible, source-linked AI contract workflows

Applying these principles consistently requires more than good intentions. It requires a platform built to support them.

Jarel is built specifically for legal professionals who need AI assistance that stays connected to its sources. Every output in Jarel’s workspace is linked back to the underlying contract language, statute, or case law that informed it, so your review process has something concrete to verify against. The platform includes audit logs, access controls, and review trails that make the kind of governed workflow described in this article practical rather than theoretical. For teams ready to move from ad hoc AI use to a structured, compliant drafting process, Jarel’s responsible AI platform provides the infrastructure to do it right.

Frequently asked questions

What is the biggest risk of using AI in contract drafting?

The biggest risk is relying on AI-generated content without human verification, which can let hallucinated citations or factual errors slip into executed contracts. The NCSC AI Hallucination Guide describes hallucination categories including fabricated case citations, distorted holdings, false procedural information, and blended legal concepts across jurisdictions.

Are lawyers allowed to use AI under the ABA Model Rules?

Yes, lawyers can use AI if they maintain competence, confidentiality, supervision, and proper verification over the work product. ABA Formal Opinion 512 frames generative AI as a technology lawyers must use competently under existing professional duties.

Do AI benchmarks mean human review is unnecessary?

No, even top-performing AI tools require human verification, especially for high-risk contract clauses and citations. Empirical benchmarking suggests some AI tools can match or outperform humans on limited drafting tasks, but accuracy is not perfect and risk varies significantly by use case.

What contract terms should be in AI vendor agreements?

Include provisions for data rights, liability allocation, model training restrictions, auditability, and compliance with applicable regulatory frameworks. Structured vendor contracting with clause-level governance for these issues is a recognized component of responsible AI use in legal practice.